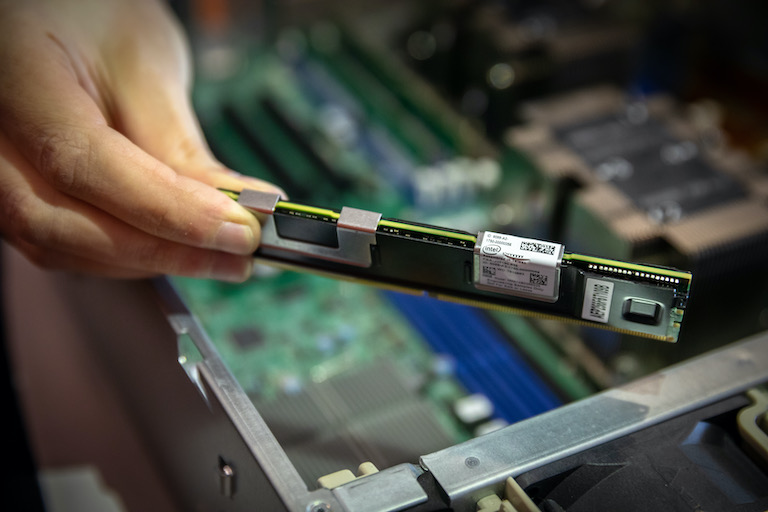

The NVSL builds cutting-edge systems to understand how non-volatile memories (and other storage, memory, networking, and programming technologies) will shape future computer systems and give us the performance we need to enable next-generation applications.

More importantly, we train researchers and builders who can recognize the full-system impact of new technologies and translate that understanding into useful systems.